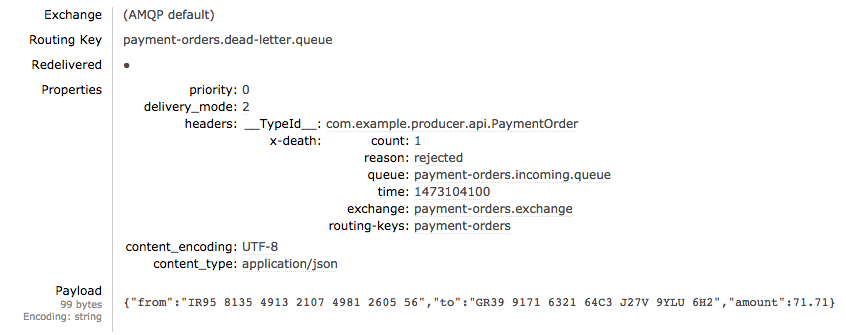

In distributed systems, retries are inevitable. From network errors to replication issues and even outages in downstream dependencies, services operating at a massive scale must be prepared to encounter, identify, and handle failure as gracefully as possible.

Given the scope and pace at which Uber operates, our systems must be fault-tolerant and uncompromising when it comes to failing intelligently. To accomplish this, we leverage Apache Kafka, an open source distributed messaging platform, which has been industry-tested for delivering high performance at scale. Utilizing these properties, the Uber Insurance Engineering team extended Kafka’s role in our existing event-driven architecture by using non-blocking request reprocessing and dead letter queues (DLQ) to achieve decoupled, observable error-handling without disrupting real-time traffic. This strategy helps our opt-in Driver Injury Protection program run reliably in more than 200 cities, deducting per-mile premiums per trip for enrolled drivers.

In this article, we highlight our approach for reprocessing requests in large systems with real-time SLAs and share lessons learned.

The backend of Driver Injury Protection sits in a Kafka messaging architecture that runs through a Java service hooked into multiple dependencies within Uber’s larger microservices ecosystem. For the purpose of this article, however, we focus more specifically on our strategy for retrying and dead-lettering, following it through a theoretical application that manages the pre-order of different products for a booming online business. In this model, we want to both a) make a payment and b) create a separate record capturing data for each product pre-order per user to generate real-time product analytics. This is analogous to how a single Driver Injury Protection trip premium processed by our program’s back-end architecture has both an actual charge component and a separate record created for reporting purposes.

In our example, each function is made available via the API of its respective service. Figure 1, below, models them within two corresponding consumer groups, both subscribed to the same channel of pre-order events (in this case, the Kafka topic PreOrder):

Figure 1: When a pre-order request is received, Shop Service publishes a PreOrder message containing relevant data about the request. From there, each of the two sets of listeners reads the produced event to execute its own business logic and call its corresponding service.

A quick and simple solution for implementing retries is to use a feedback cycle at the point of the client call. For example, if the Payment Service in Figure 1 is experiencing prolonged latency and starts throwing timeout exceptions, the shop service would continue to call makePayment under some prescribed retry limit (perhaps with some backoff strategy) until it succeeds or another stop condition is reached.

While retrying at the client level with a feedback cycle can be useful, retries in large-scale systems may still be subject to:

- When we are required to process a large number of messages in real time, repeatedly failed messages can clog batch processing. The worst offenders consistently exceed the retry limit, which also means that they take the longest and use the most resources. Without a success response, the Kafka consumer will not commit a new offset and the batches with these bad messages would be blocked, as they are re-consumed again and again, as illustrated in Figure 2, below.
- It can be cumbersome to obtain metadata on the retries, such as timestamps and nth retry.

Figure 2: If there is a breaking change in the downstream Payment Service, for instance, unexpected charge denial for previously valid pre-orders, then these messages would fail all retries. The consumer that received that specific message does not commit the message’s offset, meaning that this message would be consumed again and again at the expense of new messages that are arriving in the channel and now must wait to be read.

If requests continue to fail retry after retry, we want to collect these failures in a DLQ for visibility and diagnosis.
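The client-level feedback cycle this article describes (retrying a call such as makePayment under a retry limit with backoff, then collecting exhausted messages for diagnosis) can be illustrated with a minimal Python sketch. This is not the implementation used for Driver Injury Protection. The names `call_with_retries`, `DeadLetter`, and `dead_letters` are hypothetical, an in-memory list stands in for a Kafka DLQ topic, and every exception is assumed to be retryable.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class DeadLetter:
    """A failed message plus the retry metadata kept for diagnosis."""
    message: dict
    attempts: int
    last_error: str
    attempt_timestamps: List[float] = field(default_factory=list)


def call_with_retries(
    message: dict,
    handler: Callable[[dict], None],
    dead_letters: List[DeadLetter],
    max_attempts: int = 3,
    base_delay_s: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Call handler(message), retrying with exponential backoff.

    Returns True on success. After max_attempts failures the message is
    appended to dead_letters (a stand-in for a DLQ) and False is returned.
    """
    timestamps: List[float] = []
    last_error = ""
    for attempt in range(1, max_attempts + 1):
        timestamps.append(time.time())  # record when each attempt started
        try:
            handler(message)
            return True
        except Exception as exc:  # assumption: every error is retryable
            last_error = repr(exc)
            if attempt < max_attempts:
                # Delay doubles each time: base, 2*base, 4*base, ...
                sleep(base_delay_s * 2 ** (attempt - 1))
    # Retry limit reached: keep the message and its metadata for inspection.
    dead_letters.append(
        DeadLetter(message, max_attempts, last_error, timestamps)
    )
    return False
```

Because each dead-lettered entry records the attempt count and timestamps, the retry metadata that is otherwise cumbersome to obtain travels with the failed message. Note that this loop still blocks the caller while it retries, which is the clogging problem described in this article.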


The recent Arena of Sompek Event in STO was immense fun and presented me with an opportunity to fine tune the ground build on my primary character. Furthermore, the current return of the Phoenix Prize Pack has allowed me to spend a lot of my surplus Dilithium. I obtained a Kobali Samsar Cruiser last night which allowed me to complete the Kobali Space Set. It’s also nice to be able to finally own the iconic Red Matter Converter, which was only previously available in the Collector’s Edition of STO on launch. However, for me the best item available from the Phoenix Prize Pack is the special Phoenix Upgrade Tech (equivalent to multiple Universal Superior Tech Upgrades, with no Dilithium costs). I have used a hundred plus and have now managed to upgrade a lot of my gear to Epic quality. Finally, after months of tweaking and customising, I’ve broken the 30K DPS barrier.

Once you reach level cap in STO you quickly find that the bulk of the endgame is focused upon experimenting with builds and striving to increase your DPS. Although featured episodes and events are regularly added to the game, there are no traditional dungeons offering fancy gear as rewards. Gear is created through the reputation system and then upgrading it offers the opportunity to add modifiers. Events and PVE queues are effectively used as proving grounds. Once a player reaches a certain level of DPS, it requires a comprehensive program of subtle changes to see any further improvements.
